Cleaning Pasted Configuration Data to Prevent System Errors

Messy configuration data is one of the fastest ways to introduce silent failures into a system. A single misplaced delimiter, duplicate entry, or hidden character can break scripts, confuse parsers, or trigger incorrect outputs. The problem often starts with something simple: copying and pasting data from emails, spreadsheets, or logs into configuration files without proper cleanup.

Developers and administrators work with raw data daily. Logs, environment variables, API responses, and server lists all pass through manual workflows at some point. Without structure, these datasets quickly become inconsistent. Over time, small inconsistencies lead to larger system errors that are difficult to trace back.

Quick Summary

- Unstructured pasted data often contains duplicates, formatting issues, and hidden errors

- Cleaning configuration data improves reliability and reduces debugging time

- Simple tools and structured workflows help standardize inputs

- Consistent formatting practices support better system security and performance

Why Pasted Data Causes Hidden System Failures

Pasted configuration data rarely arrives in a clean format. It might include trailing spaces, inconsistent line breaks, or duplicate entries. These issues are not always visible. They can exist quietly until a system processes the data and produces unexpected results.

Consider a scenario where a list of IP addresses is pasted into a firewall rule set. If duplicates exist, the system may waste processing cycles. If formatting is inconsistent, some entries may fail validation. In more complex environments, such issues can cascade into outages or incorrect routing decisions.
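To make the firewall scenario concrete, here is a minimal sketch that checks a pasted block of IP addresses using Python's standard `ipaddress` module. The function name `check_ip_list` and the sample addresses are illustrative assumptions, and the sketch expects one address per line.

```python
import ipaddress

def check_ip_list(raw_text):
    """Sort pasted lines into valid, invalid, and duplicate IP addresses.

    Hypothetical helper for illustration; assumes one address per line.
    """
    valid, invalid, duplicates = [], [], []
    seen = set()
    for line in raw_text.splitlines():
        entry = line.strip()  # drop leading/trailing whitespace from the paste
        if not entry:
            continue  # ignore blank lines
        try:
            addr = ipaddress.ip_address(entry)
        except ValueError:
            invalid.append(entry)  # not a well-formed IPv4/IPv6 address
            continue
        if addr in seen:
            duplicates.append(entry)
        else:
            seen.add(addr)
            valid.append(str(addr))
    return valid, invalid, duplicates

# Sample paste: one trailing space, one repeat, one out-of-range octet
pasted = "10.0.0.1\n10.0.0.2 \n10.0.0.1\n10.0.0.300\n"
valid, invalid, duplicates = check_ip_list(pasted)
```

Reporting the invalid and duplicate entries, rather than silently dropping them, makes it easier to see what was wrong with the source of the paste.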

Many professionals overlook these risks because the data looks correct at first glance. The problem is not what is visible. The problem is what remains hidden beneath the surface of pasted text.

Start by Removing Duplicate Entries

Duplicate entries are one of the most common issues found in pasted configuration data. They often occur when merging lists from different sources or copying repeated blocks from logs. Even a small duplication can create unnecessary overhead or conflicting instructions.

Using tools designed to remove duplicate lines helps ensure that each entry is unique and intentional. This step is simple, yet it has a strong impact on system clarity. It reduces noise and makes debugging more straightforward.

Deduplication is especially important in environments where configurations are processed line by line. Examples include firewall rules, DNS records, and environment variable files. Clean inputs reduce the risk of redundancy and conflict.
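For line-based files, deduplication can be sketched in a few lines of Python. This version keeps the first occurrence of each line and preserves the original order. It also assumes that surrounding whitespace is not significant and that blank lines can be dropped, which may not hold for every format. The name `remove_duplicate_lines` is illustrative.

```python
def remove_duplicate_lines(text):
    """Return text with repeated lines removed, keeping first occurrences in order.

    Assumption: lines are compared after stripping surrounding whitespace,
    and blank lines are discarded.
    """
    seen = set()
    unique = []
    for line in text.splitlines():
        key = line.strip()
        if not key or key in seen:
            continue  # skip blanks and lines already kept
        seen.add(key)
        unique.append(key)
    return "\n".join(unique)

cleaned = remove_duplicate_lines("a.example.com\nb.example.com\na.example.com \n")
```

Order is preserved on purpose: in rule sets that are evaluated top to bottom, sorting the entries to find duplicates could change their meaning.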

Understanding File Structure in Unix Systems

Configuration data often lives inside structured files such as .env files, YAML documents, or JSON configurations. Understanding how these files are parsed is key to avoiding errors. Systems expect consistent formatting, predictable delimiters, and properly structured lines.

Resources such as Unix file management basics give a deeper understanding of how these files behave. These concepts help clarify why even small formatting inconsistencies can break an entire configuration.

Whitespace, indentation, and line breaks all matter. A misplaced space can change how a parser interprets a value. In YAML, for instance, indentation defines nesting, so one misaligned line can move a key to a different level or make the whole file fail to parse.
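The effect of stray whitespace is easy to demonstrate with a `KEY=VALUE` line. The sketch below compares a naive split with a small, hypothetical `parse_env_line` helper that trims both sides. Real .env loaders differ in how they treat spaces and quotes, so treat this as an illustration rather than a description of any particular tool.

```python
def parse_env_line(line):
    """Parse one KEY=VALUE line; return (key, value), or None for blanks and comments.

    Hypothetical helper: trims whitespace around the key and the value.
    """
    stripped = line.strip()
    if not stripped or stripped.startswith("#"):
        return None
    key, sep, value = stripped.partition("=")
    if not sep:
        raise ValueError(f"missing '=' in line: {line!r}")
    return key.strip(), value.strip()

raw_line = "DB_HOST = db.internal \n"

# A naive split keeps the stray spaces and the newline in both parts
naive_key, naive_value = raw_line.split("=", 1)

# The helper returns the trimmed pair
parsed = parse_env_line(raw_line)
```

With the naive split, a lookup for `DB_HOST` would miss because the stored key is `"DB_HOST "` with a trailing space.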

Split and Structure Raw Data Before Use

Large blocks of pasted data are often difficult to manage in their original form. They may contain multiple values in a single line or inconsistent separators. Before using this data, it should be broken down into structured components.

One effective approach is to split text into lines and standardize the format. This allows each value to exist on its own line, making it easier to validate and process. Structured data is easier to read, edit, and debug.

Breaking data into smaller units also supports automation. Scripts and tools can process line-based inputs more reliably than large unstructured blocks. This simple step improves both accuracy and efficiency.
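As a rough sketch of this step, the function below splits a pasted block on commas, semicolons, and line breaks, then returns one trimmed value per list item. The default delimiter set and the name `split_into_lines` are assumptions. Values that legitimately contain a comma or semicolon would need a different approach.

```python
import re

def split_into_lines(raw_text, delimiters=",;"):
    """Split pasted text on the given delimiters and on line breaks.

    Returns a list of non-empty, whitespace-trimmed values.
    """
    # Character class matching any delimiter or line-break character
    pattern = "[" + re.escape(delimiters) + r"\r\n]+"
    parts = re.split(pattern, raw_text)
    return [part.strip() for part in parts if part.strip()]

servers = split_into_lines("web01, web02;web03\r\nweb04,,\n web05 ")
structured = "\n".join(servers)  # one value per line, ready for line-based tools
```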

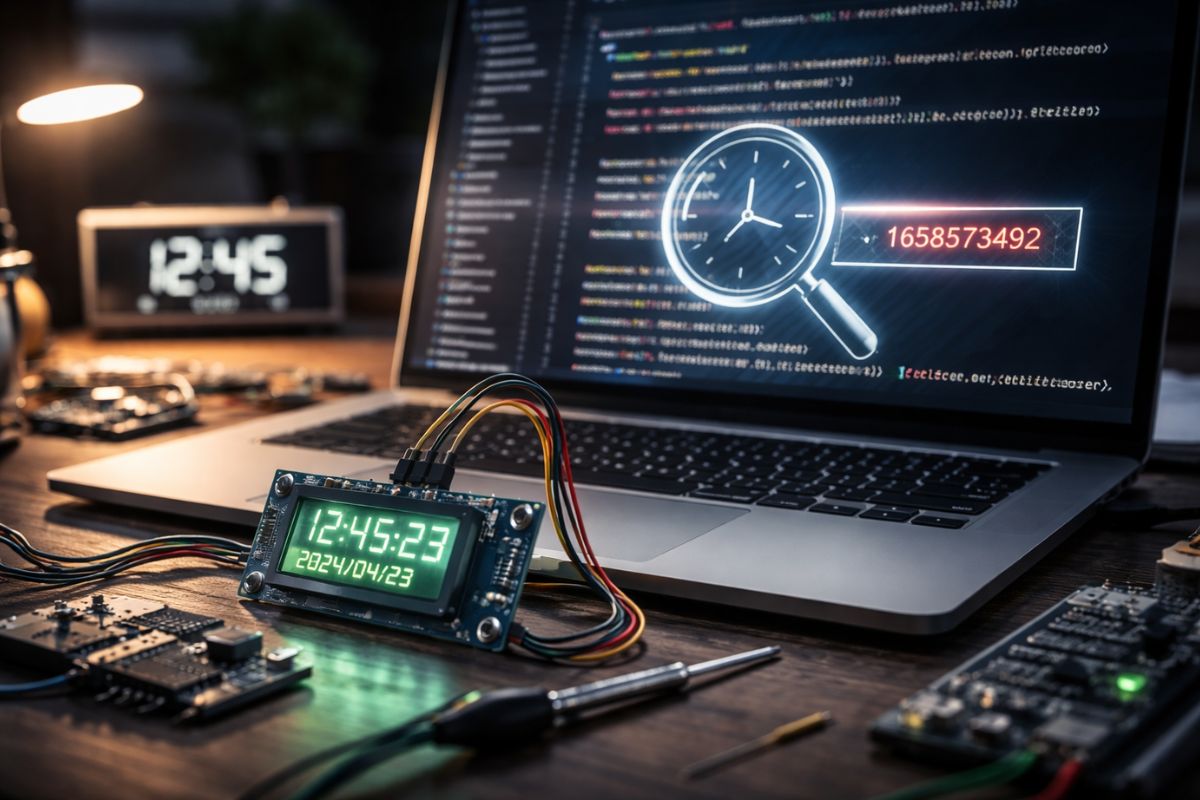

Debugging Configuration Errors in Real Time

When systems fail, configuration errors are often one of the first areas to investigate. Debugging becomes much easier when the data is clean and structured. Without cleanup, developers must spend additional time identifying formatting issues that could have been prevented earlier.

Real-world debugging scenarios are explored in detail in API timestamp debugging cases. These examples highlight how small data inconsistencies can lead to significant system issues.

Clean configuration data reduces the complexity of troubleshooting. It allows developers to focus on logic and system behavior instead of formatting problems.

Common Data Issues Found in Pasted Configurations

Not all data issues are obvious. Many of them are subtle and require careful inspection. Below are common problems that appear in pasted configuration data:

- Trailing spaces at the end of lines

- Mixed delimiter usage such as commas and semicolons

- Duplicate entries across merged datasets

- Hidden characters from copied content

- Inconsistent line breaks or encoding formats

Each of these issues can introduce unexpected behavior. Addressing them early prevents downstream errors and improves system reliability.
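Several of these issues are invisible in an editor, so a small scanner can help. The sketch below reports trailing whitespace, Windows-style carriage returns, and hidden characters such as zero-width spaces and non-breaking spaces. The function name and the choice of which characters to flag are assumptions and can be adjusted to the formats in use.

```python
import unicodedata

def find_line_issues(text):
    """Return (line_number, issue) tuples for common paste artifacts."""
    issues = []
    for number, line in enumerate(text.split("\n"), start=1):
        if line.endswith("\r"):
            issues.append((number, "carriage return (Windows line ending)"))
            line = line[:-1]
        if line != line.rstrip(" \t"):
            issues.append((number, "trailing whitespace"))
        for char in line:
            # Unicode category "Cf" covers invisible format characters such as
            # zero-width spaces and byte order marks; the non-breaking space
            # (U+00A0) is flagged separately because it looks like a space.
            if unicodedata.category(char) == "Cf" or char == "\u00a0":
                issues.append((number, f"hidden character U+{ord(char):04X}"))
    return issues

sample = "host=a \nhost=b\u200b\r\nhost=c\u00a0d\n"
report = find_line_issues(sample)
```

The scanner only reports problems and leaves the text unchanged, so the person pasting the data can decide how each one should be fixed.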

Building a Repeatable Data Cleaning Workflow

Cleaning configuration data should not be a one-time task. It should be part of a repeatable workflow that developers and administrators follow consistently. This approach ensures that every dataset entering the system meets a minimum quality standard.

Here is a practical workflow that can be applied across different environments:

1. Paste raw data into a temporary workspace

2. Remove duplicate entries and redundant lines

3. Standardize delimiters and formatting

4. Split data into structured lines

5. Validate the cleaned data before applying it

This structured approach reduces errors and improves consistency across systems. It also saves time during debugging and maintenance.
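The steps above can be sketched as one small function. This is only an illustration under stated assumptions: the separators are commas, semicolons, and line breaks; the validation rule is supplied by the caller; and splitting happens before deduplication so that repeated values on the same line are also caught. The names `clean_pasted_data` and `looks_like_hostname` are hypothetical.

```python
import re

def clean_pasted_data(raw_text, is_valid):
    """Split, deduplicate, and validate pasted entries.

    Returns (accepted, rejected) lists in their original order.
    """
    # Steps 3 and 4: standardize separators and split into one entry per item
    entries = [part.strip() for part in re.split(r"[,;\r\n]+", raw_text)]
    entries = [entry for entry in entries if entry]
    # Step 2: drop duplicates while preserving first-seen order
    unique = list(dict.fromkeys(entries))
    # Step 5: validate each entry before it is applied anywhere
    accepted = [entry for entry in unique if is_valid(entry)]
    rejected = [entry for entry in unique if not is_valid(entry)]
    return accepted, rejected

# Deliberately simple hostname pattern, used here only as an example rule
HOSTNAME = re.compile(
    r"[a-z0-9]([a-z0-9-]*[a-z0-9])?(\.[a-z0-9]([a-z0-9-]*[a-z0-9])?)*"
)

def looks_like_hostname(entry):
    return HOSTNAME.fullmatch(entry) is not None

raw = "web01.example.com, web02.example.com;web01.example.com\nbad host!\n"
accepted, rejected = clean_pasted_data(raw, looks_like_hostname)
```

Passing the validator in as a parameter keeps the same workflow reusable for IP addresses, hostnames, or environment variable names.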

How Clean Data Improves System Security

Clean configuration data does more than improve performance. It also enhances system security. Poorly formatted data can create vulnerabilities. For example, incorrect firewall rules or malformed access lists can expose systems to unauthorized access.

Security frameworks emphasize the importance of proper data handling and validation. Guidelines from usability and security standards highlight how structured inputs reduce risks and improve system behavior.

Consistent formatting ensures that security rules are applied correctly. It prevents accidental gaps that attackers could exploit. In this way, data cleanliness becomes a foundational part of system defense.

Visual Comparison of Clean vs Messy Data

| Aspect | Messy Data | Clean Data |
|---|---|---|
| Structure | Inconsistent formatting | Standardized lines |
| Duplicates | Repeated entries | Unique values only |
| Readability | Difficult to scan | Easy to review |
| Error Risk | High | Low |

Small Habits That Prevent Big Issues

Data cleaning does not require complex tools or advanced skills. It starts with small habits that become part of daily workflows. These habits help maintain consistency and reduce errors over time.

For example, always reviewing pasted data before saving a configuration file can prevent many issues. Using tools to format and validate inputs helps ensure that the data meets expected standards. These actions take only a few minutes but can save hours of troubleshooting later.

Another useful habit is documenting data sources. Knowing where data comes from helps identify potential inconsistencies. It also makes it easier to track changes and maintain accuracy.

Creating Reliable Systems Through Clean Inputs

Reliable systems depend on reliable data. Configuration files act as the foundation for many processes, from server management to application behavior. If the foundation is unstable, the system cannot perform as expected.

Clean data supports better automation, clearer logic, and more predictable outcomes. It reduces the likelihood of unexpected failures and improves overall system performance. Developers can work with confidence, knowing that their inputs are consistent and accurate.

By integrating simple cleaning practices into daily workflows, teams can significantly reduce system errors. The result is a more stable environment that is easier to manage and maintain.

Turning Raw Inputs Into Stable Configurations

Raw data is rarely perfect. It often arrives in a format that is not ready for immediate use. Transforming this data into a structured format is a key step in maintaining system reliability. This process involves cleaning, organizing, and validating each piece of information before it is applied.

With the right approach, even large datasets can be managed effectively. The goal is not perfection, but consistency. Structured data allows systems to operate smoothly and reduces the risk of unexpected behavior.

Over time, these practices become second nature. They form the backbone of efficient workflows and reliable systems. Clean configuration data is not just a technical detail. It is a critical factor in building stable and secure environments.
